I followed this tutorial and everything seemed fine until I reached Step 21.

“Save the changes and restart the affected services. These changes need a restart of Spark2 service. Ambari UI will prompt a required restart reminder, click Restart to restart all affected services.”

After I initiated the restart, one of the items in the restart dialog (“run_customscriptaction”) seems to have failed.

I clicked into the “Run Customscriptaction Actionexecute” screen and this is the error log:

The stdout is quite large, but the bottom of it looks like this:

statsmodels-0. 99% |############################## | Time: 0:00:00 76.77 MB/s

statsmodels-0. 99% |############################## | Time: 0:00:00 76.78 MB/s

statsmodels-0. 99% |############################## | Time: 0:00:00 76.80 MB/s

statsmodels-0. 100% |###############################| Time: 0:00:00 76.80 MB/s

statsmodels-0. 100% |###############################| Time: 0:00:00 76.72 MB/s

anaconda-2020. 0% | | ETA: --:--:-- 0.00 B/s

anaconda-2020. 100% |###############################| Time: 0:00:00 39.12 MB/s

anaconda-2020. 100% |###############################| Time: 0:00:00 27.61 MB/s

('Start downloading script locally: ', u'https://raw.githubusercontent.com/TheEagleByte/azure-hail/master/hail-install.sh')

Fromdos line ending conversion successful

('Unexpected error:', "('Execution of custom script failed with exit code', 1)")

Removing temp location of the script

Command failed after 1 tries

It seems that the installation script is looking for a particular pip that was not installed. Could this be caused by a misconfiguration?
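The log above only reports that the custom script exited with code 1, not which command inside it failed. One way to narrow that down is to re-run a locally saved copy of the script by hand on a head node and look at the end of its stderr. The following is a minimal sketch, not part of the tutorial: `run_and_capture` is a hypothetical helper name, and it assumes Python 3.7+ and a copy of `hail-install.sh` saved locally.

```python
import subprocess


def run_and_capture(command, tail_lines=20):
    """Run a command and return (exit_code, last lines of its stderr)."""
    # capture_output/text require Python 3.7 or newer
    result = subprocess.run(command, capture_output=True, text=True)
    stderr_tail = result.stderr.splitlines()[-tail_lines:]
    return result.returncode, stderr_tail


# Hypothetical usage on a head node, with the script saved as ./hail-install.sh:
#   code, tail = run_and_capture(["bash", "-x", "./hail-install.sh"])
#   print("exit code:", code)
#   print("\n".join(tail))
# `bash -x` traces each command to stderr, so the tail shows the last
# commands that ran before the failure.
```

If the tail shows a `pip` path that does not exist on the node, that would support the missing-pip guess.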

Thanks a lot!

@kumarveerapen

I wonder if @IqviaGarrettBromley could chime in on this since he has had experience in setting up Hail on Azure.


Hi @xunzhustjude,

Can you share a screenshot or something of the script actions page for the cluster in the Azure dashboard? That is where they should have executed and displayed a status of successful/failed.

Also, I'm not sure I would set up on Azure at the moment: there is a known issue where you cannot write any Hail table or matrix table to ADLS Gen 2 storage. You will receive errors saying “the stream has already been closed”. The last working version on Azure is 0.2.34; the bug was introduced in 0.2.35, which had ~2.6k lines of changes around the io package.

If you have the option to, I would recommend moving to a different cloud provider, or use that version (which I wouldn’t necessarily recommend).
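For anyone who does stay on Azure, here is a minimal sketch of a guard that checks a Hail version string against the 0.2.34 cutoff mentioned above. The cutoff is only what is reported in this thread, the helper names are hypothetical, and the sketch assumes the version string may carry a `-<hash>` suffix after the numeric part.

```python
# Last version reported in this thread as working on Azure (not independently verified)
LAST_WORKING_ON_AZURE = (0, 2, 34)


def parse_version(version):
    """Turn a string like '0.2.34' or '0.2.34-abc123' into a tuple of ints."""
    numeric_part = version.split("-")[0]  # drop an optional '-<hash>' suffix (assumption)
    return tuple(int(piece) for piece in numeric_part.split("."))


def reported_as_working(version):
    """True if the version is at or below the cutoff reported in this thread."""
    return parse_version(version) <= LAST_WORKING_ON_AZURE


# Hypothetical usage inside a notebook on the cluster:
#   import hail as hl
#   if not reported_as_working(hl.version()):
#       print("This Hail version may hit the ADLS Gen 2 write issue")
```

Comparing tuples of integers rather than raw strings avoids ordering mistakes such as treating "0.2.100" as smaller than "0.2.34".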

Thanks for your help, @IqviaGarrettBromley!

> Can you share a screenshot or something of the script actions page for the cluster in the Azure dashboard?

When clicking into the failed action:

I attached the entire debug information here: debug_information.txt (6.2 KB)

> If you have the option to, I would recommend moving to a different cloud provider, or use that version (which I wouldn’t necessarily recommend).

Currently Azure is what we prefer because it is a close collaborator of St. Jude. However, I will discuss with our team members regarding the possibility of switching to another cloud provider for Hail.

Sorry for making multiple posts, but it seems that as a new user I am not able to upload more than one picture per post.

Thanks for the info. I just used that script last month, so not sure why it’s suddenly throwing an error. Can you show what the cluster configuration looks like? Versions and storage options and such?

@IqviaGarrettBromley

Thanks for helping!

The Cluster type as shown on Azure is: Spark 2.4 (HDI 4.0)

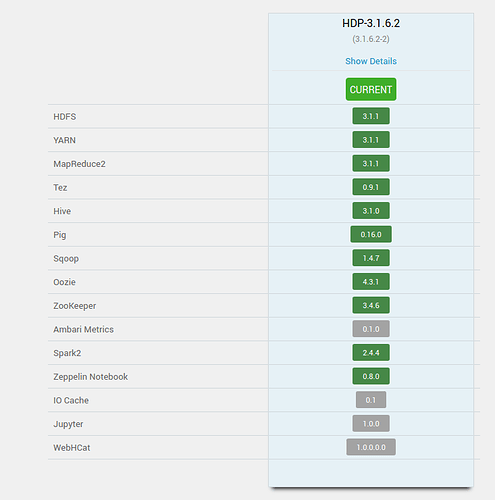

Here are the rest of the versions

Which “configuration” file do you need?

@kumarveerapen Sorry Kumar, I was distracted by something else for the past few days. I’m coming back to this now.
